MindBytes Poster Gallery 2015

Great Match in Natural Language Processing in Big Data at Scale

Google n gram viewer is the most famous implementation of n gram. n gram is an essential tool for Natural Language Processing. Since the birth of the project, no one has not been able to compete with Google n gram

viewer or has not even tried to do so. On this project, the author challenges Google n gram viewer in terms of # of generated n gram words building up n gram from scratch with maximum support of multicore CPUs.

Bayesian variant-based pathway enrichment analysis using GWAS summary statistics

åCarbonetto and Stephens (2013) developed a multiple-SNP modeling approach that integrated pathway enrichment analysis with variant prioritization in enriched pathways, and demonstrated its potential to yield novel

biological insights into complex human traits and diseases. The method, however, is limited by the requirement of individual-level genotype and phenotype data, which are not widely available for large GWAS.

In contrast, single-SNP association summary statistics are often released in public domain. Here we present a new Bayesian method for multiple-SNP pathway analysis that relies solely on GWAS summary statistics

and linkage disequilibrium (LD) structure inferred from a public reference panel. Our method adopts a recently proposed large-scale Bayesian regression model for GWAS summary statistics (Zhu and Stephens, ASHG

2015). Unlike in previous work where each SNP was treated equally likely to be associated with the phenotype a priori, the new method allows the prior probability of each SNP being associated to depend on its

membership of a pathway so that potential enrichment of associations within the pathway can be captured. A parallel algorithm using mean-field variational approximation is developed to ensure scalability for

genome-wide applications. On summary statistics of 435,615 SNPs in a GWAS of Crohn's disease and 3,160 curated pathways from eight web databases, our method obtains results comparable to the analysis that used

individual-level data (Carbonetto and Stephens, 2013). The top-ranked pathways that show strong support for enrichment in Crohn's disease are IL12-mediated signaling, cytokine signaling, IL23-mediated signaling

and immune system (Bayes Factor = 2.63e9, 7.41e8, 5.25e8, 1.74e6). We also apply the method on the summary statistics of 1,064,575 SNPs in a GWAS of human height and 3,700 pathways, and identify highly enriched

gene sets that play important roles in bone biology, including Hedgehog signaling, RAC1 signaling and Y branching of actin filaments.

Testing Land Coverage Classification Algorithms for Optimizing Flood Detection in Hyperspectral Image Data

In remote sensing, hyperspectral imaging instruments provide data with potentially high predictive performance in image classification. However, the limited computational capabilities onboard these sensors do not

allow full utilization of the data. For instance, the algorithms onboard NASA’s Earth Observing-1 (EO-1) satellite are limited to using only 12 of 242 spectral bands due to data size. Project Matsu, a cloud-based

collaboration with NASA, makes processed hyperspectral data available to the public, within 24 hours of acquisition. Utilizing this framework facilitates fast access and computational power over the full dataset,

allowing us to test machine learning algorithms for land cover classification in hyperspectral data using all 242 bands to improve upon the existing water detection algorithms currently used onboard the satellite.

Using a diverse training set of hyperspectral data, we achieve a significant accuracy increase of 5%-20%.

Robust Prior Analysis and Detection of Significant Change

Our research is focused on analysis of future climate change and e ects of model misspecication on economic policy. In particular, the uncertainty in a climate component of linked economic-climate model a ects robust

energy/consumption policies. A decision maker wants to minimize regrets over the least favorable outcome possible resulting from policy applied over a set of climate models considered.

Scenes

Social scientists and others consider many types of contexts that can join in the scenes approach. But if the components have been used previously, scenes analysts join them together to create a new holistic synthesis.

That is a scene includes (1) Neighborhoods, rather than cities, metro regions, states/provinces, or nations. (2) Physical structures, such as dance clubs or shopping malls. (3) Persons, described according to

their race, class, gender, education, occupation, age, and the like. (4) Specific combinations of 1-3, and the activities which join them, like young tech workers attending a local punk concert. (5) These four

in turn express symbolic meanings, values defining what is important about the experiences offered in a place. General meanings include legitimacy, defining a right or wrong way to live; theatricality, an attractive

way of seeing and being seen by others; authenticity, a real or genuine identity. (6) Publicness – rather than the uniquely personal and private, scenes are projected by public spaces, available to passers-by

and deep enthusiasts alike. (7) Politics and policy, especially policies and political controversies about how to shape, sustain, alter, or produce a given scene, how certain scenes attract (or repel) residents,

firms, and visitors, or how some scenes mesh with political sensibilities, voting patterns, and specific organized groups, such as new social movements.

RA scalable and cost-effective method for measuring pharyngeal pumping under controlled conditions

C. elegans feeding consists of two pharyngeal motions: pumping and isthmus peristalsis. Pumping is typically quantified by counting the number of quasi-periodic contractions of the terminal bulb during a fixed short

period. Under ideal imaging conditions, i.e., high magnification and high spatial and temporal resolutions, automated detection of pharyngeal pumping can be achieved using intensity threshold-based machine vision.

However, such conditions require the dedication of significant resources to every animal, thus limiting the throughput of the assay. We employ a mixture of affordable optics and novel analysis to build a high-throughput

imaging and analysis pipeline. Models of regulatory strategies can potentially be tested using detailed experimental data and may assist in conceptualizing the data in terms of an optimality principle in feeding.

Non-universal star formation in turbulent interstellar medium

Recent observational evidence indicates variation of efficiencies at which giant molecular clouds (GMCs) convert their gas into stars. Consistent theory of galaxy formation must explain such variation. Common numerical

models, in which star formation efficiency (SFE) is assumed constant (at the level of few %) above certain density threshold, by their design are not suitable for this purpose. Theoretically, variation of SFE

is attributed to the turbulent nature of interstellar medium. Unfortunately, state-of-art galaxy formation models lack relevant small-scale motions due to limited resolution that hardly reaches typical scale

of the largest GMCs (few 10 pc). However, with an appropriate subgrid model of turbulence star formation can be connected to resolved dynamics with the aid of theoretical and numerical models of star formation

in turbulent medium. In this work we implement such model coupled with prescription for star formation in compressible MHD turbulence. We find that our model predicts distribution of r.m.s. turbulent velocities

consistent with local and extragalactic observations (on average few km/s on 100 pc scale). In our model turbulence is produced in warm gas (T ∼ 104 K) at level of few km/s and is amplified by compression in

spiral arms up to few tens km/s. As far as star formation is concerned, in our simulation we observe distribution of rates that is in a good agreement with both local GMCs data and resolved extragalactic star

formation maps. The resulting variation of efficiency is found to be due to scatter in turbulent properties. Our model predicts high abundance of molecular gas inefficiently forming stars along with existence

of very efficient GMCs.

In Silico Construction of a Host/Pathogen Patient Cohort Using HPC Parameter Sweeps on an Agent Based Model of Sepsis

Current predictive models for sepsis generally use correlative methods, and as such are limited in their individual precision due to patient heterogeneity and data sparseness. The use of computational modeling and

simulation can aid in the process of contextualizing data generated by complex systems in order to describe their behavior. Towards this end, we have performed a multi-dimensional parameter sweep on a previously

validated model of sepsis. Data from this parameter sweep has been used to construct an in silico cohort of patients, defined by parameters representing host health and microbial virulence, upon which further

studies and simulations can be performed to both understand the septic process and design putative interventions.

Merging novel imaging technologies to understand muscle dynamics in monkey mouths

Muscles can function in diverse ways to move the skeleton, and our lab examines on how muscles and skeletons work together to produce movements; we focus primarily on chewing and swallowing in primates. Here we

describe two new technologies that can aid in understanding anatomy and function: 1) XROMM (X-ray Reconstruction Of Moving Morphology), a tool for visualizing skeletal movements based on x-ray video and CT scanning;

and 2) contrast-enhanced CT scanning, which allows for accurate depiction of individual muscle fibers in addition to skeletal anatomy. Each of these techniques is computationally intensive in its own right,

and integrating them to gain a more complete understanding of musculoskeletal biomechanics is difficult. We faced problems with data volume (many files of different types, some of huge sizes), data management,

logistics of sharing sensitive and complex data, and analytical tools for processing our data. Inside the University, the RCC has customized hardware and software solutions for automatically downloading/archiving

our files, keeping each trial’s data organized, and expediting metadata entry for each trial. In support of our collaborative efforts outside of the University, the RCC has organized for secure, streamlined

access to our data for off-campus collaborators and automated a pipeline for using computational tools developed at other universities using our data.

Coupled charge transport in the Cl-/H+ antiporter

The chloride channels (ClC) are a family of proteins that transport Clacross membranes either as selective ion channels or secondary active Cl-/H+ antiporters. ClC-ec1, a prokaryotic homologue of ClC, uses Cl- gradient

to pump H+ thermodynamically uphill through the membrane, or vice versa. We have studied this ion exchange process by calculating free energy profile for migration of each ion through the protein channel. Free

energy calculation was done with a suite of multi-scale methods, ranging from a classical MD simulation to use of semi-quantum mechanical reactive model and the hybrid QM/ MM method. Here we report results on

the proton transport process and coordinated movement of Cl- ions through the external and internal gate regions. A Markov state model was constructed to connect the intermediate states, identified in the free

energy profile of Cl- transprot. The model showed a good agreement with experimental results of the the Cl- conduction rate and Cl-/H+ exchange ratio. Our results suggest a plausible mechanism for coupled ion

exchange.

Water Management in the U.S Southwest: A Systems View

N-body simulations are often used to study how dark matter self-organizes into a complex "cosmic web" of filaments and halos. However, the discrete nature of simulations makes it difficult to study the low-density

regions of the cosmic web. We developed a code that studies these regions by directly representing the continuous phase-space structure of a simulation's dark matter. This allows for the creation of ultra-high

resolution density maps, like the one shown.

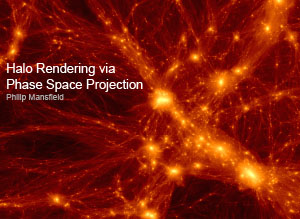

Halo Rendering via Phase Space Projection

N-body simulations are often used to study how dark matter self-organizes into a complex "cosmic web" of filaments and halos. However, the discrete nature of simulations makes it difficult to study the low-density

regions of the cosmic web. We developed a code that studies these regions by directly representing the continuous phase-space structure of a simulation's dark matter. This allows for the creation of ultra-high

resolution density maps, like the one shown.

Multiscale simulations reveal the proton pumping mechanism in cytochrome c oxidase

Cytochrome c oxidase (CcO) reduces oxygen to water and uses the released free energy to pump protons across the membrane, contributing to the transmembrane proton electrochemical gradient that drives ATP synthesis.

Herein, we provide a complete atomic level description of the key steps of the proton pumping mechanism in aa3-type CcO. We have used multiscale reactive molecular dynamics simulations to explicitly characterize

(with free energy profiles and calculated rates) the internal proton transport events that enable pumping and chemistry during a reaction step that involves proton transport to the pump loading site (PLS) and

to the catalytic site (binuclear center, BNC) (the A→PR→F transition). Our results show that both proton transport events are thermodynamically driven by electron transfer from heme a to the BNC, but that pumping

(amino acid residue E286 to the PLS) is kinetically favored, while transfer of the chemical proton (E286 to the BNC) is rate-limiting. The calculated rates are in quantitative agreement with experimental measurement.

The back flow of the pumped proton from the PLS to E286 is prevented by the fast reprotonation of E286 through the D-channel and a large free energy barrier for the back flow reaction. Proton transport through

the D-channel is not rate-limiting during the A→PR→F transition, but is strongly coupled to solvation changes across the N121-N139 asparagine gate. Our results also show how the D-channel biases unidirectional

proton transport from the inner to outer side of the membrane.

Predicting Chicago Real Estate Market Absorption

Opportunities to support urban economic decision-making with analytical models are extensive in the real estate market. Both buyers and sellers face uncertainty in real estate transactions in large metropolitan

areas about when to time a transaction and at what cost. A housing demand index based on microscopic home showings events data can provide decision-making support for buyers and sellers on a very granular time

and spatial scale. In the current real estate market, both buyers and sellers make decisions without knowing the present and future state of the large and dynamic real estate market. Consequently, accurate and

granular housing market demand forecasts play a valuable role in these decisions. In this paper, we aim to predict housing market demand by developing housing demand indices using high-volume, high-velocity

data on home showings, listing events, and historic sales data. By employing a combination of traditional market measures supplemented by the number of home showings, the indices result in timely insight into

housing market demand. We demonstrate our analysis using data from seven million individual records sourced from a unique, proprietary dataset that has not previously been explored in application to the real

estate market. We then employ a series of predictive models to estimate current and forecast future housing demand. Specifically, we first develop a shorter-term market demand heat index that predicts housing

demand for the subsequent week using only past weekly market demand and home showings data.

Integrating big data analysis and visualization

Language is manifested at multiple, interconnected levels of structure: letters/sounds, words, phrases, etc. How can linguistic structure be learned? With the availability of large datasets (e.g., text corpora,

transcribed speech collections), we develop unsupervised approaches to learning linguistic structure from unstructured data, which has important implications for both the scientific study of language and language

technologies. We are constructing Linguistica 5, a software with a graphical user interface that integrates linguistic data analysis and visualization.

Organic solar cell models predict how structures change properties

Solar cell devices based upon organic electronics possess many advantages relative to traditional silicon photovoltaics, including potentially inexpensive manufacturing, band gaps which are tunable through chemical

modification, and high optical absorption coefficients. Recent progress in generating improved polymers for organic solar cells has suggested that precise electronic energy level alignment between multiple donor

polymers (e.g. PTB7, PID2, etc.) significantly improves device performance. The effect of specific morphologies on device efficiency is difficult to determine experimentally, but our theoretical models can directly

probe how structural changes affect electronic properties, such as band locations and band gaps. Using periodic plane wave DFT and many body perturbation theory (G0W0) calculations, we calculated the dependence

of electronic states on order parameters such as backbone dihedrals. Hybrid density functional theory, in particular, performs well for these systems. Our results suggest that accurate description of the device

performance must include a description of local disorder. In particular, the potential energy scans we performed suggest multiple possible configurations which differ significantly in electronic structure. Using

the lowest energy conformations, our alignment of electronic energy levels is in good agreement with experimental values.

Mean-variance Optimization for Equity Portfolio Selection

This research explores mean-variance optimization for stock portfolio modeling on high-dimensional datasets. The availability of data allows for average investors to obtain large datasets for use in making investment

decisions. The two step model used Sortino Ratio ranking and then mean-variance optimization to select a portfolio of stocks. The resultant portfolio outperformed the benchmark (S&P 500) both in-sample, and

out-of-sample

Predicting Financial Market Direction Using Social Media Data

This research project is a study of investor sentiment as derived from social media content collected using RCC infrastructure and its ability to predict short-term stock market direction. The team choose S&P 500

and Russell 2000 indices to represent the stock market for this project. The study involves using cutting edge Text Analytics/ Natural Language processing techniques to analyze articles posted on financial social

media websites and derive daily ‘Sentiment Measures’ from it. The results indicate that these ‘Sentiment Measures’ have strong short term relationship with Russell 2000 Index. On the other hand, there were no

strong evidence of short term relationship between sentiment measures and S&P 500 Index. Using these Sentiment measures, the research team was able to predict the Russell index direction for the next trading

day with an 80% accuracy rate.

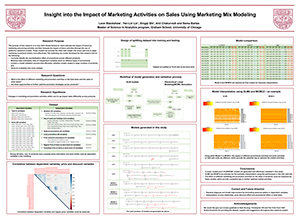

Insight into the Impact of Marketing Activities on Sales Using Marketing Mix Modeling

The purpose of this research is to help HAVI Global Solutions’ client estimate the impact of historical marketing and pricing activities and then forecast the impact of future activities through the use of predictive

statistical models. These models can provide the client with insight into where and how to apply marketing investment dollars more effectively. The marketing mix model developed for this research will do the

following: Correctly identify the cannibalization effect of promotions across different products; Minimize data collinearity risks of independent variables (such as different types of promotions); Contain a

model validation process that efficiently validates models related to large numbers of products, and Allow for scalability into more markets.

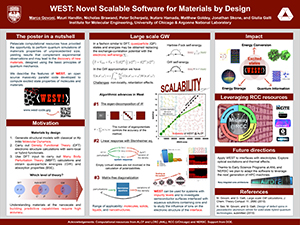

WEST: Novel Scalable Software for Materials by Design

Petascale computational resources have provided the opportunity to perform quantum simulations of materials properties of unprecedented size, yielding results that complement experimental observations and may lead

to the discovery of new materials, designed using the basic principles of quantum mechanics. Density functional theory (DFT) is one of the main tools used in first principle simulations of materials, but several

of the current approximations of exchange and correlation functionals do not provide the level of accuracy required for predictive calculations of electronic properties, such as photoemission and absorption

spectra. Many-Body Perturbation Theory (MBPT) offers an improved level of accuracy, but it is more computationally demanding than DFT and often difficult to apply for realistic materials including disorder,

defects, interfaces or nanostructured solids. We describe the features of WEST, an open source massively parallel code to compute excited state properties of molecules and materials (www.west-code.org), which

is scalable up to ~32k BG/Q nodes. We will discuss the electronic structure of systems relevant to solar energy conversion processes obtained with WEST, as well as the parallel performance of the code.

Beyond-DFT Electronic Structure: Spin-Orbit Coupling and Surface Defect Calculations

While density functional theory (DFT) is widely used, it gives large errors for several properties, such as semiconductor band gaps. The GW approximation to the Dyson equation is a very successful method which begins

with a DFT calculation and computes corrections based on many-body theory. GW calculations have traditionally been extremely challenging to perform for large systems, but newly developed algorithms and a high

performance code developed by some of the authors, WEST, greatly improve the efficiency of GW calculations. Here, we present extensions and applications of the WEST code. First, spin-orbit coupling is included

in calculations, so that solids and nanoparticles containing heavy elements such as gold and lead can be calculated with much better accuracy. Second, we use WEST to refine the simulation of a dangling bond

on a hydrogen-passivated silicon surface. This system shows promise for quantum information applications, and it is also an intermediate step toward the fabrication of complex atomic-scale silicon devices.